The Cognitive Debt Problem

Students are performing better with AI, but retaining less in certain contexts. That gap has a name.

Thanks to our Presenting Sponsors Hire Education, Tuck Advisors, and Cooley, for making Edtech Insiders possible.

Sponsored by Overdeck Family Foundation

By: Rui Lin Ler

Special thanks to Overdeck Family Foundation for sponsoring this article, the first of eight in our AI & Efficacy Editorial Research Series diving into key research findings from Stanford’s AI Hub for Education Research Repository (a project by Stanford’s SCALE Initiative). Stay tuned for more!

The promise of AI in education is real: personalized explanations and reduced barriers for students who previously had no support. But Stanford’s SCALE Initiative recently reviewed 20 causal studies on AI in K-12 education — one of the most rigorous syntheses of the evidence to date. Their headline finding: AI tools improve student performance while students have access to them, but those gains weaken or disappear when students are assessed independently. The question of whether AI is supporting learning or quietly replacing it is no longer rhetorical. The evidence is emerging.

The Numbers Look Fine… Until They Don’t

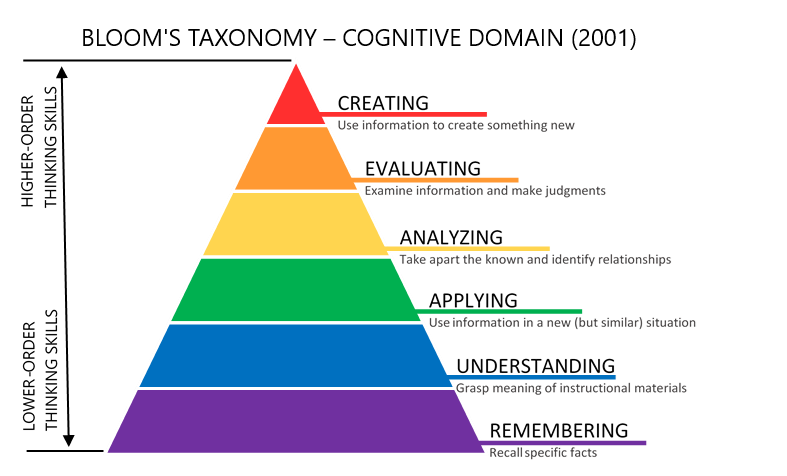

To understand why this matters, it helps to use a framework most educators already know: Bloom’s taxonomy. Learning isn’t flat. It builds from the bottom up: remembering and understanding at the base, then applying, analyzing, evaluating, and creating at the top. Durable learning has always meant moving up the pyramid, building on incremental gains.

AI is doing something very specific to this pyramid. It’s accelerating performance at the lower tiers while leaving the upper ones largely untouched… and sometimes even making them harder for students to reach.

In one randomized study, a group of students using AI outperformed a control group that was not using AI by 22 points on immediate recall and comprehension tasks — exactly the bottom rungs of Bloom’s. That’s the kind of result that shows up in a grade book and looks like success. But three weeks later, the same gap between these groups had collapsed to 6 points and was no longer statistically significant. For higher-order tasks — synthesis, evaluation, application — the control group without AI led at every single timepoint.
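To make the size of that collapse concrete, here is a quick back-of-the-envelope sketch in Python. It uses only the two figures quoted above (22 points and 6 points). The function name is ours, and treating the gap as a simple ratio is an illustrative assumption, not the study's own analysis.

```python
# Figures as reported above: the AI group's lead over the control group
# on recall and comprehension tasks, in points.
immediate_gap = 22  # measured right after the task
delayed_gap = 6     # measured three weeks later


def share_of_gap_retained(immediate: float, delayed: float) -> float:
    """Fraction of the initial advantage still present at the delayed test.

    Illustrative helper (not from the study): a plain ratio of the two gaps.
    """
    return delayed / immediate


retained = share_of_gap_retained(immediate_gap, delayed_gap)
lost = 1 - retained
print(f"Retained: {retained:.0%}, lost: {lost:.0%}")  # Retained: 27%, lost: 73%
```

Roughly three quarters of the initial advantage was gone within three weeks. Because the remaining 6-point gap was not statistically significant, the share actually retained may be lower than this ratio suggests.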

Another study found that students who used ChatGPT to improve their essays showed significant score gains compared to peers who didn’t — but zero difference in knowledge gain or transfer when tested afterward. Offloading the bottom of Bloom’s pyramid did not free students to wrestle with deeper learning or perform better at the top. The essay improved. The same cannot necessarily be said about student understanding.

This is the core measurement problem facing schools right now. Most assessments capture performance at the moment of submission. They aren’t designed to catch cognitive debt, because the debt comes due later — after the unit, after the term, sometimes after graduation. When schools evaluate AI tools against grade outcomes, they’re looking at exactly the metric most likely to give a false positive.

What’s Actually Being Offloaded

One of the most striking bodies of evidence on this question doesn’t come from test scores. It comes from EEG scans.

In a four-month longitudinal study, researchers tracked brain activity across three groups of students — those writing with ChatGPT, with a search engine, or without any tools. The pattern was consistent and worsening over time. But it wasn’t just a general dimming of brain activity. The research identified specific, meaningful changes:

Brain rhythms associated with deep memory formation (theta and alpha waves) declined across all four months — the oscillations that fire when we struggle to recall something, make a new connection, or work to integrate an idea into what we already know.

Synchronization between executive reasoning and memory retrieval areas weakened — meaning the brain’s core architecture for durable learning was progressively less active.

The outcome was stark. By the final session, 78% of students who had relied on ChatGPT couldn’t quote anything from essays they had written minutes earlier. In the no-AI group, that figure was 11%.

This isn’t a story about effort or motivation. It’s about what happens neurologically when the brain is relieved of certain tasks — repeatedly, over time. The brain is efficient: if a tool will do the remembering, connecting, and evaluating, it will gradually stop doing those things itself.

Process-mining research shows the behavioral counterpart. By tracking exactly which steps students took during AI-assisted writing tasks, researchers mapped where the work goes when AI is present:

Natural Learning Loop: Write → Return to sources → Orient & plan → Evaluate own work → Revise

The AI Loop: Write → Ask AI → Accept output → Ask AI again

The steps that disappear — re-reading, self-evaluation, planning — are precisely the ones that drive encoding and retention. What the EEG data shows at the neurological level, the process-mining data confirms at the behavioral level: the same functions, going quiet.

The Load Reduction Paradox

Reducing cognitive load isn’t inherently bad. In fact, it’s often exactly what good teaching tries to achieve.

Cognitive load is the mental effort required to process new information. Two types matter most here: extraneous load, the overhead created by poor design, unclear instructions, or split attention between sources; and intrinsic load, the difficulty that comes from genuinely grappling with complex ideas. Eliminating extraneous load is unambiguously good; it’s wasted effort that gets in the way of learning. Eliminating intrinsic load is where cognitive debt accumulates, because that struggle is where learning actually happens.

Several studies have found that AI-powered explanations significantly reduce student overwhelm, improve self-efficacy, and make students more likely to engage with difficult material. That’s the real value. In one study, students using AI explanations reported significantly lower cognitive load and higher self-efficacy than students using traditional textbook materials. That is a genuine improvement in how they experienced learning.

But the same study found no meaningful improvement in actual learning outcomes. The comfort was real. The learning gain wasn’t. Reducing the struggle didn’t produce more learning; it produced more ease.

Most AI tools don’t distinguish between the two types of load. They reduce friction broadly — including the productive friction students need to form durable knowledge. The result is a tool that feels better to use and, in many cases, teaches less.

The Capability Prerequisite

There’s an equity argument that often gets made in favor of AI in schools: that it democratizes access to high-quality, personalized explanation, giving every student the kind of patient support that used to require an expensive tutor or an unusually attentive teacher. This argument is compelling but incomplete.

What the data shows is more uncomfortable. In a study of a student-facing AI chatbot deployed specifically to support struggling learners, lower self-efficacy students used the tool most intensively. But 40% of their queries were direct answer requests. More than 80% had no relationship to their enrolled coursework at all. More usage, no score improvement, shallower engagement throughout.

The pattern points to something that rarely gets said plainly: using AI well is itself a skill, and that skill requires baseline knowledge. Students with strong conceptual foundations can use AI as a multiplier — to pressure-test their thinking, explore alternative explanations, identify gaps in their understanding. Students without that foundation tend to use it as a replacement. They can’t evaluate what AI gives them, because they don’t yet know enough to know what’s missing.

This doesn’t make AI inequitable by design. But it does mean that deploying AI without first building prior knowledge structures may be widening the very learning gap it was meant to close. The students who benefit most from AI-assisted learning are, by and large, the ones who need it least.

What Good Design Actually Looks Like

None of this argues for removing AI from classrooms. It argues for being deliberate about when AI appears and what role it plays — and the research is specific enough to offer real guidance.

The most important finding is about sequence. In the four-month EEG study mentioned above, one group of students wrote without AI first, then used it later. These students didn’t just retain more — they used AI better. Their prompts were more precise, their critical integration of AI output stronger, their essays more coherent. Having first built a real knowledge structure, they could deploy AI against it productively.

The risk is not AI’s presence. It’s its persistence.

A few design principles that the research supports directly:

Protect the First Attempt: Before AI enters, students should grapple with the task on their own — even imperfectly. This is when initial encoding happens. Temporal separation (task first, AI second) is one of the simplest and most consistently supported interventions in the literature.

Force the Evaluation Loop: One study found that students who used a structured self-assessment rubric before accessing AI were the only group to significantly increase their own evaluative thinking during the task. A simple checklist — what do I already know, where am I stuck, what am I actually trying to figure out — before AI access keeps metacognitive processes active.

Target the Right Kind of Load: AI explaining a concept before a student attempts a problem is different from AI completing the attempt. The former reduces extraneous load and can genuinely help. The latter reduces intrinsic load and is where debt accumulates. Good design does the first and protects the second.

Give Teachers Visibility: Students can’t see the cognitive debt they’re accumulating: they feel more capable, not less. Teachers can’t intervene on a problem they can’t see. Using student prompt logs as formative data isn’t about surveillance; it’s about giving educators the visibility to notice when AI use has drifted from support into substitution.
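For product teams, the first, second, and fourth principles can be sketched as a simple gate in front of the AI. The Python below is a minimal illustration under our own assumptions, not an implementation from any of the studies: the `TaskSession` class, its method names, and the lock message are all hypothetical, and the AI call is a placeholder rather than a real model.

```python
from dataclasses import dataclass, field

# Checklist questions taken from the self-assessment prompts described above.
CHECKLIST = (
    "What do I already know?",
    "Where am I stuck?",
    "What am I actually trying to figure out?",
)


@dataclass
class TaskSession:
    """One student's progress through a single task (hypothetical, illustrative)."""

    first_attempt: str = ""
    checklist_answers: dict = field(default_factory=dict)
    prompt_log: list = field(default_factory=list)  # visible to the teacher

    def submit_first_attempt(self, text: str) -> None:
        # Protect the first attempt: the student's own work comes before AI.
        self.first_attempt = text.strip()

    def answer_checklist(self, question: str, answer: str) -> None:
        if question not in CHECKLIST:
            raise ValueError(f"Unknown checklist question: {question}")
        self.checklist_answers[question] = answer.strip()

    def ai_unlocked(self) -> bool:
        # AI opens only after a non-empty attempt and a fully answered checklist.
        has_attempt = bool(self.first_attempt)
        checklist_done = all(self.checklist_answers.get(q) for q in CHECKLIST)
        return has_attempt and checklist_done

    def ask_ai(self, prompt: str) -> str:
        if not self.ai_unlocked():
            return "Locked: finish your first attempt and the checklist first."
        self.prompt_log.append(prompt)  # logged as formative data
        return "AI access granted."  # placeholder; no real model is called


# Usage: AI stays locked until the student has done their own thinking.
session = TaskSession()
print(session.ask_ai("Write my essay for me"))
session.submit_first_attempt("My rough draft of the argument.")
for question in CHECKLIST:
    session.answer_checklist(question, "My own answer.")
print(session.ask_ai("Where is my argument weakest?"))
```

The design choice worth noting is that blocked prompts are not logged here, so the log reflects only AI use that followed an independent attempt. A real tool might reasonably log both.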

The Question Schools Aren’t Asking Yet

Right now, students have no signal that cognitive debt is building, and most schools have no metric that would catch it before it compounds.

Students who rely heavily on AI report higher confidence and lower perceived effort. They feel like they’re learning more. The debt is invisible precisely because supported performance feels like real performance — until the scaffold is removed and there’s nothing underneath.

The question worth asking isn’t whether to use AI in classrooms. That ship has sailed. The question is whether we’re designing for the moment of use — the essay that gets submitted, the quiz that gets aced — or for the learning that has to survive after the session ends.

Right now, most tools are optimized for the former. The research is making a clear case for the latter.

Top Edtech Headlines

1. Hackers Deface School Login Pages Following Instructure Breach Claims

Hackers have begun defacing school login pages after claiming responsibility for another breach involving Instructure, escalating concerns about the scale and visibility of recent cyberattacks targeting education systems.

2. NYC Parents Demand AI Moratorium as Concerns Boil Over at School Board Meeting

More than 100 parents, students, and educators spoke out at a marathon New York City school board meeting, calling for a pause on AI use in schools amid growing concerns about transparency, safety, and oversight. The push for a moratorium reflects rising tension between rapid AI adoption and a lack of clear policies, with critics arguing that schools are moving too quickly without fully understanding the risks or impacts on students.

3. Google Expands AI Training Efforts by Putting Educators at the Center

Google is expanding its AI education efforts across the Asia-Pacific region with $10 million to equip 4.7 million students, teachers and workers with AI skills. The initiative emphasizes teachers as key drivers of impact, aiming to equip them with the tools and knowledge to integrate AI thoughtfully into classrooms and scale its benefits across entire learning ecosystems.

4. Education Department Prioritizes AI in Federal Grant Funding Decisions

The U.S. Department of Education is placing new emphasis on artificial intelligence in its discretionary grant programs, giving preference to proposals that expand AI literacy, support ethical use, and improve student outcomes.

Kira 2.0: Real Voices from the Launch Event

At the Kira 2.0 launch event in New York City, Sarah Morin captured rapid-fire insights from educators, administrators, and edtech leaders reacting in real time to one of the most ambitious AI learning platforms to date. Listen in to our episode on The Edtech Insiders Podcast to hear insights from:

Brandon Chitty, Executive Director of Innovations, Broken Arrow Public Schools

Brett Roer, CEO & Founder, Amplify and Elevate Innovation

Harl Roehm, CTE Teacher, Collierville High School

Jesse Lubinsky, Principal Evangelist, Adobe Education

Lance Key, Instructional Technology Coordinator, Putnam County Schools

Rachelle Dene Poth, Educator, Consultant & Attorney

Hannah Gurmankin, Special Education Teacher

Scott Brewster, Co-Founder, Hats & Ladders

I'm so glad you have a name for this and are making education aware of this. I noticed this effect when online platforms were first introduced for math when my daughter was in middle school. It appeared that she learned the content, but when it came time to test her knowledge, the learning did not stick. I'm in education and believe AI has a place, but the people designing it really need to have deep knowledge of learning, so that it doesn't just "look good."

Not sure about the claim that 78% of ChatGPT users couldn't quote their own essays. It is presented without a citation or methodological detail.